What is a framing camera?

1 billion fps and beyond

Nearly seventeen years ago, Specialised Imaging introduced a framing camera that pushed the boundaries of capturing ultra-high-speed events for cutting-edge scientific research applications, where there can be no compromise on speed or accuracy. To understand the technical challenges involved in developing framing cameras, we need to look at the fundamental technical principles of what a framing camera is, how it captures high-speed events, and what should be considered when choosing one.

What is a framing camera?

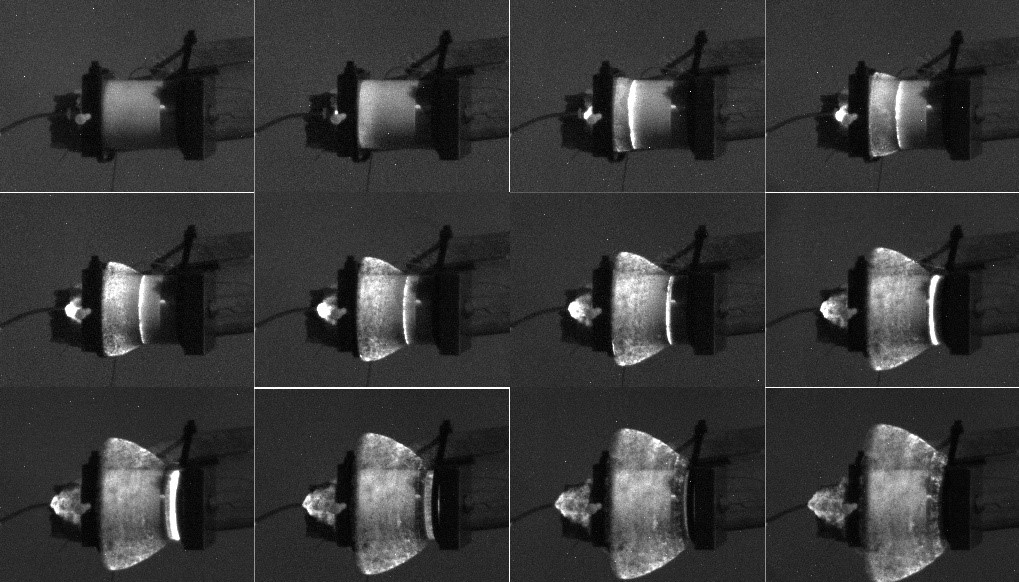

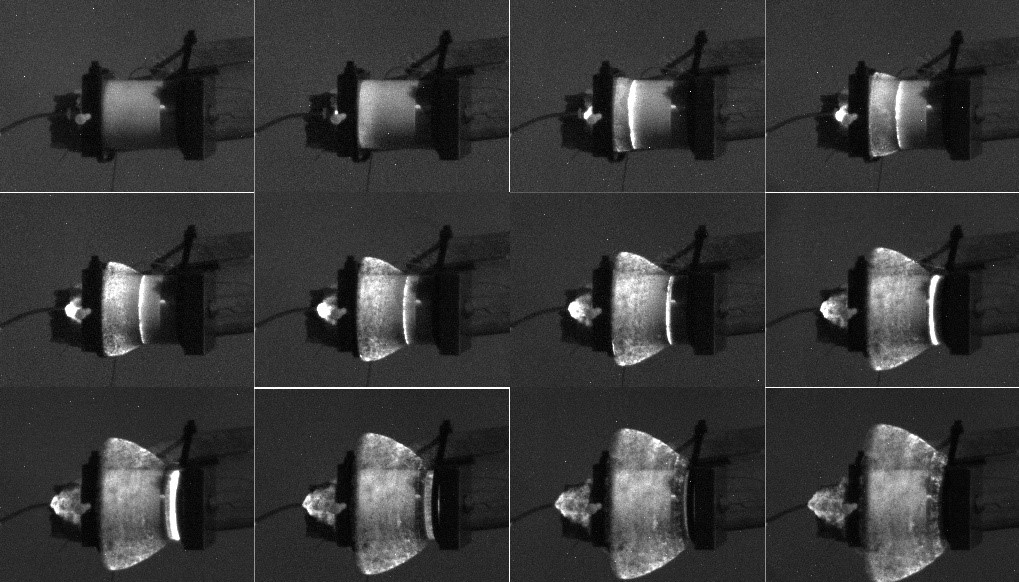

The term framing camera can mean any camera capturing a 2D image, but in this discussion it refers to a camera designed to capture a series of consecutive images of events that typically complete within nanoseconds or microseconds. The captured images are used in scientific research to visualise exactly what is happening by replaying the sequence slowly. In some applications, quantitative data is extracted from the images using methods such as digital image correlation (DIC). Typical applications include ballistic protection development, stress testing of materials, cavitation studies and electrical insulator breakdown.

How does a framing camera capture high speed events?

Movement is captured by cameras as a series of images. To capture a very short duration event in detail you need to capture as many consecutive images as possible during the event. There are several methods to achieve this, but the most flexible and compact way to achieve the best images is to use a beam splitter, multiple image sensors, and multiple image intensifiers.

Multiple Sensors

Most video cameras, such as those in smartphones, have a single lens with a single sensor behind it. Each image is captured by the sensor, then moved to separate memory allowing the sensor to be ready to capture the next image. Typically, a camera like this can record between 25 and 1000 fps (frames per second).

Framing cameras also use one lens at the front of the camera but have multiple sensors, or ICCDs (intensified charge-coupled devices), behind the lens, typically up to 16. To ensure that identical images fall on each ICCD, an optical beam splitter divides the input image equally into up to 16 copies. Each sensor receives only 1/16th of the original light, but the split makes it possible to capture 16 images of the same scene. Rather than all sensors capturing at the same time and producing 16 identical images, the image intensifiers (see below) can be i) switched on and off and ii) offset in time relative to each other with picosecond accuracy. In this way a framing camera can achieve a far higher frame rate than a regular camera. The CCD sensors can capture only one or two images (depending on type) before they need to transfer an image to memory. The transfer typically takes several milliseconds, so only one or two images in an ultra-high-speed sequence are captured per ICCD.
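The staggered gating described above can be sketched in a few lines of Python. This is only an illustration, not the camera's control software: the function name `gate_open_times_ns` and its parameters are hypothetical, and it assumes evenly spaced frames with one exposure per ICCD.

```python
def gate_open_times_ns(n_channels: int, fps: float) -> list[float]:
    """Return the time (in ns after the trigger) at which each intensifier gate opens.

    Assumes evenly spaced frames and one exposure per ICCD channel.
    """
    if n_channels < 1 or fps <= 0:
        raise ValueError("n_channels must be >= 1 and fps must be > 0")
    interframe_ns = 1e9 / fps  # start-to-start spacing between consecutive frames
    return [i * interframe_ns for i in range(n_channels)]


# 16 channels at 100 million fps: the gates open 10 ns apart
times = gate_open_times_ns(16, 100e6)
print(times[:4])  # [0.0, 10.0, 20.0, 30.0]
```

Each channel still reads out slowly (milliseconds), but because every channel is exposed only once, the sequence rate is set by the gate offsets rather than by the sensor readout.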

Image Intensifiers

In a framing camera, the beam splitter means that each sensor receives only a fraction of the available light. In addition, events lasting only a few nanoseconds or microseconds require a short exposure time, in the region of a few nanoseconds, to freeze the motion effectively. Other factors also reduce the amount of light reaching the imaging device, but these two are the most significant. To compensate, a framing camera places an image intensifier (a photon multiplier) in front of the CCD (hence ICCD) to increase the image brightness uniformly and with minimal distortion. More importantly, the image intensifier acts as a very fast shutter and is the means of controlling the exposure timing of each ICCD. Controlling and synchronising the image intensifiers, which require kilovolt-level voltages, is technically very challenging, and the high voltages are potentially dangerous to adjacent electronic circuitry.

How do you compare framing cameras?

The main considerations in selecting the right framing camera for an application are frame rate, record duration, number of frames and resolution, but it is also important to understand what governs the image dynamic range:

1. Frame rate or speed

Capture speed is measured in frames per second (fps). A higher frame rate allows shorter-duration events to be captured. Conventional high-speed video cameras trade off image size in pixels to achieve faster frame rates. Framing cameras capture a limited number of images but maintain the image size in pixels, so despite having fewer images and inherently shorter record durations than a high-speed video camera, there is no trade-off between speed and image resolution. The ability to independently adjust the start and end of the exposure for each ICCD relative to the other ICCDs allows irregular timing to be used. The spacing between consecutive frames is usually referred to as the interframe time. For example, if an event is faster at the beginning, the interframe time can be shorter between the earlier images and longer between the later images. Independent adjustment also allows the record duration to be changed to suit the event duration. In addition, if the event is brighter or self-illuminating at some point, the exposure times of the images occurring at that point can be shortened to avoid overexposure.
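As a rough illustration of irregular timing, the sketch below describes each frame by a start delay and an exposure time, then reports the spacing between frames. The `Frame` class, the function name and the schedule values are all hypothetical, and the interframe time is assumed here to be measured from the start of one exposure to the start of the next.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    start_ns: float     # delay from trigger to gate opening
    exposure_ns: float  # how long the gate stays open


def interframe_times_ns(frames: list[Frame]) -> list[float]:
    """Start-to-start spacing between consecutive frames (assumed definition)."""
    ordered = sorted(frames, key=lambda f: f.start_ns)
    return [b.start_ns - a.start_ns for a, b in zip(ordered, ordered[1:])]


# Hypothetical schedule: tight spacing early, wider spacing later,
# and a shorter exposure on the third frame where the event is brightest.
schedule = [
    Frame(start_ns=0, exposure_ns=5),
    Frame(start_ns=10, exposure_ns=5),
    Frame(start_ns=20, exposure_ns=2),
    Frame(start_ns=50, exposure_ns=5),
    Frame(start_ns=150, exposure_ns=5),
]
print(interframe_times_ns(schedule))  # [10, 10, 30, 100]
```

The same five frames cover 150 ns here, whereas evenly spaced at 10 ns they would cover only 40 ns. That is how independent timing stretches or compresses the record duration to suit the event.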

2. Record duration

Framing camera record duration depends on the frame rate chosen by the user. Since the number of images per ICCD is fixed (one or two), a faster frame rate reduces the time between the first and last images captured. For example, at 100,000,000 fps, 16 images will provide a record duration of 160 ns.
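The arithmetic behind that example is simply the number of images divided by the frame rate. A minimal sketch, assuming evenly spaced frames and counting one full frame interval per image as the example does (the function name is hypothetical):

```python
def record_duration_ns(n_images: int, fps: float) -> float:
    """Record duration in ns for evenly spaced frames.

    Counts one full frame interval per image, matching the example in the text.
    """
    if n_images < 1 or fps <= 0:
        raise ValueError("n_images must be >= 1 and fps must be > 0")
    return n_images * 1e9 / fps


print(record_duration_ns(16, 100e6))  # 160.0
print(record_duration_ns(16, 1e6))    # 16000.0 (16 microseconds at 1 million fps)
```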

3. Number of frames

The maximum number of images a framing camera can capture in a sequence is limited by how large the beam splitter can be made before it becomes impractical, and by the fact that each imaging sensor (ICCD) can capture only one or two images within the duration of an event. As a general guide, the trade-off is frames per second against the number of images. To illustrate this, a Specialised Imaging Kirana high-speed video camera offers up to 7 million fps with a fixed 180 images. By comparison, a Specialised Imaging SIMD ultra-fast framing camera offers up to 1 billion fps but a maximum of 32 images.

4. Resolution

The resolution performance of a framing camera, which has a significant impact on image quality, is a function of both the sensor resolution, measured in pixels, and the image intensifier resolution, measured in line pairs per mm (lp/mm). Converting a sensor's pixel size to lp/mm is a simple calculation:

Sensor resolution in lp/mm = 1000 / (2 x Pixel size in microns)

Comparing only the number of sensor pixels in a framing camera specification can be misleading, because it is often the resolution and contrast of the intensifier that limit the overall image quality. Typical intensifier resolutions range from 30 lp/mm to 50 lp/mm. Using the above equation, a sensor with a 10 μm pixel size equates to 50 lp/mm. A camera using a 3-megapixel sensor with 6 μm pixels (83 lp/mm) and a 30 lp/mm intensifier will produce lower-resolution images than a camera using a 1.5-megapixel sensor with 7 μm pixels (71 lp/mm) and a 50 lp/mm intensifier when imaging the same subject. So, no matter how many pixels the sensor contains, its spatial resolution can only be utilised if it is matched by the spatial resolution of the intensifier.
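The comparison can be reproduced with a short calculation. The sketch below applies the pixel-size formula above and then takes the lower of the sensor and intensifier figures as the limiting resolution. That minimum is a deliberate simplification assumed here for illustration (a real system's resolution depends on how the components' contrast responses combine), and the function names are hypothetical.

```python
def sensor_lp_per_mm(pixel_size_um: float) -> float:
    """Sensor resolution in lp/mm: one line pair spans two pixels."""
    if pixel_size_um <= 0:
        raise ValueError("pixel_size_um must be > 0")
    return 1000.0 / (2.0 * pixel_size_um)


def limiting_lp_per_mm(pixel_size_um: float, intensifier_lp_per_mm: float) -> float:
    """Simplified system resolution: the weaker of sensor and intensifier."""
    return min(sensor_lp_per_mm(pixel_size_um), intensifier_lp_per_mm)


# The two cameras compared in the text
camera_a = limiting_lp_per_mm(pixel_size_um=6, intensifier_lp_per_mm=30)
camera_b = limiting_lp_per_mm(pixel_size_um=7, intensifier_lp_per_mm=50)
print(round(sensor_lp_per_mm(6)), camera_a)  # 83 30
print(round(sensor_lp_per_mm(7)), camera_b)  # 71 50
```

In both cases the intensifier, not the sensor, sets the limit, which is why the camera with fewer pixels comes out ahead.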

5. Dynamic range

Dynamic range is the ratio of the detector's saturation level to the smallest signal it can detect. It can be expressed in dB but is more frequently stated in bits, where 12 bits of dynamic range corresponds to 4096 grey steps between black and white. The more dynamic range a camera system has, the greater the differentiation between light and dark, making detail easier to see. A number of factors affect a framing camera's dynamic range, but the most significant are the noise and saturation levels of both the sensor and the intensifier. While CCD sensors can claim a dynamic range (digitisation) of 12 bits, an image intensifier realistically provides between 8 bits and 10 bits (approximately 48 dB to 60 dB). The overall dynamic range of a framing camera system is limited by both the sensor and the intensifier, so comparing only the dynamic range of the camera sensor does not give the whole picture.
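To relate the units, the sketch below converts a bit depth to grey levels and to decibels, and estimates the system figure as the lower of the two components. It assumes the common 20·log10 convention for signal ratios (about 6 dB per bit), and the minimum rule is a simplification for illustration. The function names are hypothetical.

```python
import math


def grey_levels(bits: int) -> int:
    """Number of distinct grey steps for a given bit depth."""
    return 2 ** bits


def bits_to_db(bits: float) -> float:
    """Dynamic range in dB, assuming the 20*log10 signal-ratio convention."""
    return 20 * math.log10(2 ** bits)


def system_bits(sensor_bits: float, intensifier_bits: float) -> float:
    """Simplified system dynamic range: limited by the weaker component."""
    return min(sensor_bits, intensifier_bits)


print(grey_levels(12))               # 4096
print(round(bits_to_db(12), 1))      # 72.2
limit = system_bits(sensor_bits=12, intensifier_bits=10)
print(limit, grey_levels(limit))     # 10 1024
```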

Further Reading

For more detailed information about framing cameras, see the application notes below:

https://www.specialised-imaging.com/application/files/8015/4292/7636/SI_RA__SIMD-MJM_IntDetSymp2014_FinalManuscriptRev2.pdf

https://www.specialised-imaging.com/application/files/8915/4292/7609/SI_RA__Cylinder_Expansion_Test_Proposal_v1d.pdf

https://www.specialised-imaging.com/application/files/6215/4293/0140/SI_AP_Streak_camera_imaging_of_C4_Explosive.pdf